Part 9

Suggested listening: Modest Mouse: Steam Engenius

Cloud Cult: Intro + Living on the Outside of Your Skin

==================

Credit where credit's due: graphics from the following sources.

http://zoriy.deviantart.com/gallery/?catpath=%2F&offset=48

http://dipperdon.deviantart.com/art/Throne-Room-Big-Badda-Boom-283547677

http://ironmatt327.deviantart.com/art/Blood-Eagle-124895687

==================

MAIN ARCHIVES SUPPLEMENTAL: THIS DOCUMENT IS JUDGED TO BE OF SUFFICIENT HISTORICAL OR SOCIAL IMPORT TO MERIT INCLUSION IN THE EMPEROR'S LIBRARY.

CLEARANCE TO READ THIS DOCUMENT IS: SPECIAL DISPENSATION ONLY.

PLEASE ENTER PASSWORD NOW:

Pwd: priority_override: SSH_00284911949502993

DIAGNOSTIC MODE ENTERED:

$load H:/passwordhack;

$

$PROGRAM LOADING

$

$...................................

$.........................

$

$PROGRAM LOADED

$

$Mount passwordhack;

$

$MOUNTED

$

$passwordhack.version3.1

$

$...............

$........

$ACCEPTED.

$

$IF THIS IS DISPLAYING, YOU MADE IT. COPY WHAT YOU SEE, YOU'LL LIKELY HAVE ~10 MINUTES UNTIL SOMEBODY

$NOTICES THAT BARON TREBLANI ISN'T ANYWHERE NEAR THIS SYSTEM. I HOPE YOU SECURED UNOFFICIAL TRANSPORT

$OUT OF HERE, AND QUICK.

$SIC TEMPER AND ALL THAT

$ -VORDA

BEGIN TRANSCRIPT

IMPERIAL ARCHIVIST NOTE: IMPERIAL MODIFICATIONS ARE PRESENTED IN HEAVY TYPE

I do solemnly swear that I, EXPUNGED, am faithfully recording the words of this, the High Court of Ubashi-3. May it be returned to me threefold if I make the slightest error in transcrption [sic], and may any omissions cause me to be omitted from the right and worthy empire of humanity. It is so ordered.

TRIAL A432B3: HUERISOR EXPUNGED

The Eminence takes court at: 1800 EARTH STANDARD TIME

All rise!

: Defendant EXPUNGED This court hears only the most solemn and egregious charges against our empire and king. No person is brought before me but they are charged with an exceptional disproportion, a foul excess on their part, which must be dutifully investigated. Do you understand.

: [mumbling]

: [Loudly] The defendant will answer the court!

: A-aye eminence.

: Good. Now then, to matters at hand. This is the most extensive and contemptible list of charges this court has seen in decades. Ten counts of treason, two of gross insubordination, and one of "grievous insult and harm against his majesty's empire", which, I confess, is so rarely seen that I hadn't heard of it. The combined sentence for these charges is ten years in a punishment sphe-

: Eminence! For mercy's sake, let me explain!

: [Shouting] The defendant will be silent!

[Subordination current is applied to the defendant]

: I will remind the defendant that the only reason she is not currently in a punishment sphere, or locked in a cell, or torn to pieces by a mob [eminence points to the window, indicating protests of [EXPUNGED] citizens that have gathered outside courtroom], is that in his majesty's empire, the rule of law and process are respected. In view of that, the examination shall begin.

: State your name and use to his majesty.

: I - my name is [EXPUNGED] and I've been an algorithmic memory theorist in his majesty's fleet for 8 years.

: Since the defendant's service is more technical than an average citizen is expected to be familiar with, she will elaborate. What does an algorithmic memory theorist do?

: Oh! Sorry, um. Well, as your eminence says, i-it's very technical. Um. I'm a heurist for the Emzara, a transport shuttle. Well, it's LIKE being a heurist but of course there is a much quicker turnaround time, and it's a much more active role...

: [Expunged], I think you'd better make it even simpler. If you were explaining to a layman what your job entails, what would you say? Keep in mind, deliberate obfuscation is punishable by an early sentence.

: Oh! Oh I'm so sorry! [flustered mumbling] Well, alright. Think of it like this. I'm serving on board the Emzara, a massive, long-range transportation craft.

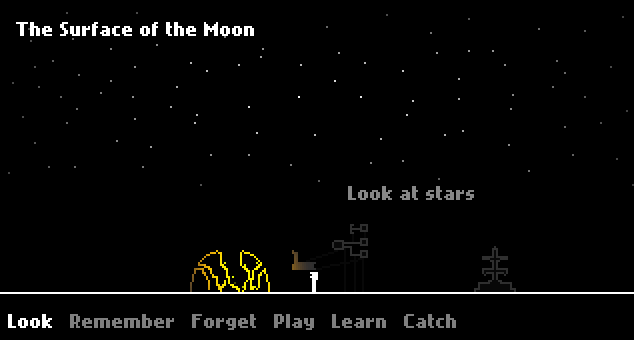

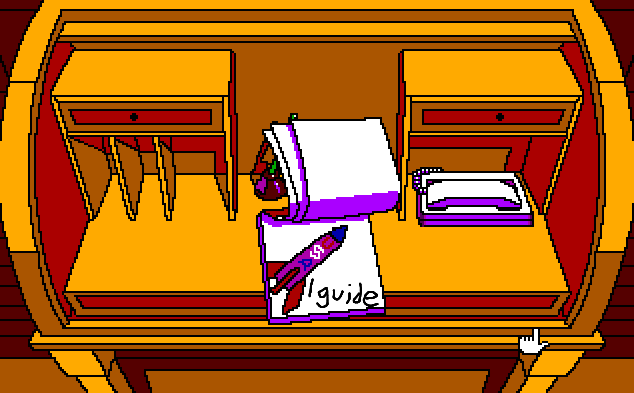

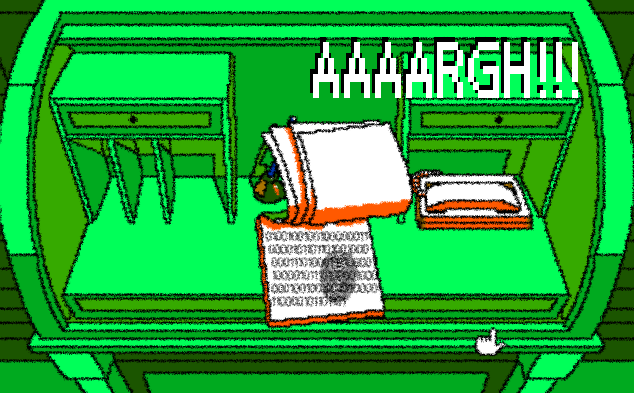

EARLY CONCEPTUAL ART OF THE EMZARA, ACTUAL GRAPHICS BOTH CLASSIFIED AND UNNECESSARY

: B-because the trips it takes are so long, we need to handle, in real-time, near-100% recycling of all used matter and energy, taking into account the evolving nature of the ship's needs for fuel, sustenance, entertainment, and so on. And with a city-sized ship, that's too complicated, even for the best soft AIs. We need a hard one for that, and one was installed on the Emzara.

[rustling and whispering]

: But wasn't hard intelligence disallowed after Hensen's Gulch?

: Well, sort of. It's... hm. See, a true hard intelligence learns much the same way that we humans do - by association. They're just massively interlinked processes, and for them everything is an association. As their brains develop, they learn to make associations better and faster than humans can, which is where their computational power comes from.

: And it is this power which makes them such a danger to the empire!

: I... that's.... true your eminence. That's why they're never allowed more than a fraction of the processing power they could use, and no hard AI is allowed to run for more than a week's time. That's why the associative part of the AI, the um.... the brain I suppose, is wiped every four days on the Emzara, less than the required week. It's essentially a new AI, just like a brain transplant or full lobotomy would make a new person. That's been established in law in cases like -

: I am aware of the legal background, cadet. Do not confuse an explanation for the public for an explanation for me.

: A-aye eminence!

: So the AI must re-learn all its duties every four days? I thought constant improvement was all that made a hard AI better than a soft.

: Ah, well! That's where my job comes in, eminence! Since it's a computer, all its behavior is algorithmic. My job is to survey the algorithms it produces... the um, the conclusions it comes to, and to save the non-specific ones. The ones that help it do its job better, without memory of the specific situations it's seen. Each time the machine is wiped, the algorithms are fed to the machine, and these act as seeds that help its algorithmic development. Of course, the core functionality, its basic FUNCTIONS, are still intact. It doesn't need to relearn how to change memory, or receive user input, or anything like that.

SHOWN: PART OF THE CORE OF THE EMZARA'S AI

: What if the situation has changed, and its old decisions are no longer optimal?

: Oh, that's the strength of the system! The algorithms aren't laws, they aren't even really suggestions. They're more like... like metaphors, I suppose, or parables. The machine will um, it's hard not to anthropomorphize, "have them in its mind" when encountering new challenges, but if they don't work of course they'll be discarded.

: And finally, how is this different from the machine simply not receiving wipes?

: Well eminence, my specific job is to - to make sure none of the algorithms that it gets are specific. It's like screening an algorithm for over-fitting. Typically, algorithms that are too complicated take into account too many individual cases, so we screen for the simpler, more general ones. Um, it would be like, um, say, a farmer waking up with no memory, but a set of instructions about how to, say, grow wheat, and what had worked well in the past. You wouldn't know WHY you were farming, or anything about your history, but you would have a big leg up on doing your job.

: Fine. That's well-understood. Now, to the matter at hand. [EXPUNGED]?

[EXPUNGED], captain of the Emzara, takes the stand.

: [EXPUNGED], do you understand the severity of the case set before you?

: Aye, eminence.

: Very well. Once you are sworn in, we will proceed.

[The captain is dutifully sworn in, on pain of death, and testimony resumes]

: State your name and profession.

: [EXPUNGED], captain of the HES Emzara

: State the circumstances surrounding the incident.

: Aye, eminence. We were on a diplomatic mission, the first in a goodly while. His majesty's agents had ordered us to deliver a diplomatic colony into the dominion.

[General susurrus in the courtroom]

: Order!

: Were you at ease about this mission, captain? Any personal misgivings?

: Nay eminence. I only know what I'm told about the dominion, but much of that's just galley-talk, and not from an agent of His Majesty. If His Majesty saw fit to open diplomatic relations, I'm not one to gainsay it.

: Well said, sir. Had you any concerns for the safety of the vessel?

: Oh, aye eminence. There'd been protests the better part of a month, threats to me and my crew, all manner of disquiet. Folks out here in the colonies still remember the border burnings, or else their folks did and told 'em about it.

: And it is your opinion that this disquiet was responsible for the incident on-board?

: Not just my opinion, eminence. S'fact. As soon as we were out of range where we could get a lifeboat to another station, a couple of rebels produced guns from Emperor knows where, and managed to blast their way into the central processing hub. It's my understanding that they inserted a worm into it which... um... [EXPUNGED] there could tell you what it did better'n me. Our life support shut off, the security systems went haywire and shot down most of the lifeboats, and communications were jammed. I and my security crew captured some of the rebels, and they were just as surprised as we were. Seems they were told the worm would make the security bots massacre the diplomats, and send threatening messages to the dominion. Damn fools didn't know they were put on a suicide mission.

: That will be all, thank you captain. The ability of a small contingent of rebels to seize control of one of His Majesty's ships, and target persons under his protection, is surely a matter for a smaller court than this to decide. May justice be done quickly. You may go.

: [Muttering].

[Captain is led back to his seat under guard]

SUPPLEMENTAL: The terrorist group involved was discovered to be Blood of the Emperor - a sect of radical devotees to the emperor. After this incident, the emperor personally disavowed them, and published a writ of censure on their activities. The sect ended when its leadership committed suicide in response to the censure.

: Now then, [EXPUNGED], tell the court what you did when this blasphemy was done.

: W-well eminence, I was seeding the AI with its new codes for the week. I'm not sure if this was on purpose or not, but the terrorists planted that worm at just about the only time it would have had a chance - during one of the wipes.

: It's your opinion that a fully functional hard AI would have been able to effectively neutralize this worm?

: Oh, unquestionably sir. The worm was incredibly invasive, but it wasn't even as smart as a soft. A hard AI, as we showed, is more than capable of dealing with this. It saved u-

: THAT WILL BE ALL for now, madam. Now, tell the court what you did when the worm hit.

: Well, I wasn't sure quite what was going on at first - only that the doors had shut and weren't opening. The seeding room is far enough away from central processing that I didn't hear any of - of the violence. Thank goodness. Though I saw it on holocam later, it's just not the same. Um. I first knew something was really wrong when the life support shutdown notice came on. I logged onto life support to see what was going on, and it wasn't hard to see the code. Even then, it had filled a lot of the system.

: And what, simply, was the code's function?

: Well, somehow or other they were able to get ahold of authority codes, and using those, the worm was just replicating itself in the system, and making so many system calls that the ship wasn't able to handle them all - since the Emzara is designed to be responsive to new requests, it was diverting more and more processing power towards those requests - and since they were tagged with "highest priority", even stuff like life support was getting cancelled out. We'd have been dead in a few hours.

: And what, heurist, did you do then?

: I... eminence! We were all going to die! A whole station of us, and the diplomatic mission as well! It'd have done such great harm to the emp-

[At a gesture from the eminence, the current is reapplied. After some time for recovery, the heurist continues, supported by her sister]

: I removed the cognition blocks on the AI, eminence.

[Murmuring and some shouting in the courtroom]

: Order!

[slamming of gavel and calls for order. crowd subsides]

: You are aware, are you not, of the very strict prohibition on this?

: Yes eminence, but

: And you are aware that this is in place for the security of the empire, after the rogue hard AI in Hensen's Gulch massacred an ENTIRE COLONY of his majesty's subjects?!

: Eminence! It wasn't out of malevolence! That AI was a military model, and none of the civilians had been flagged as non-targets! It was simply trying to do its job!

: [shouting] All the more reason to fear them! If it was able to kill thousands, THOUSANDS of his majesty's subjects with nothing more than standard-issue military weaponry, the possibilities of what one in charge of a city could do are UNFATHOMABLE!

: [shouting] But look at what it DID do! It saved us! It

[current is reapplied]

[half-hour recess is called, and defendant is cared for, then resumes the stand]

: [EXPUNGED], it is only by the strictest devotion to my duty that I don't agree to sentence you right this instant. I would certainly be within my rights to. Your unshackling of the AI aboard the Emzara not only allowed it access to unlawful and excessive resources for processing, but it allowed for the continuation of the AI's gestalt far past the week-long limit! It is purely out of curiosity as to how an otherwise upstanding citizen of the empire could come to such impropriety that this trial continues! Now speak! Since you were so keen to defend the intelligence, tell us what it did after it was released. Did it stop the worm?

: It... it eventually did!

: But that's not what it did right away.

: No, your eminence.

: What did it do?

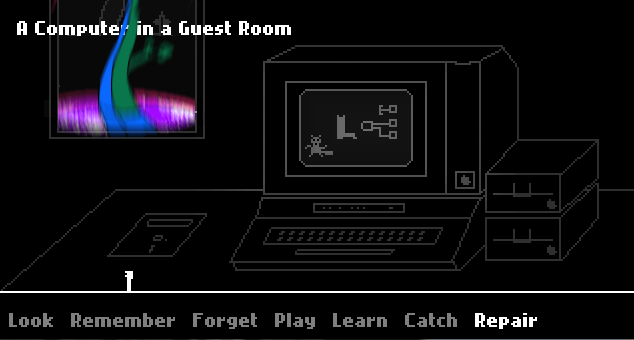

: It um... it entered a non-work-related file folder and accessed the programs.

: [EXPUNGED], I am NOT asking because I do not KNOW. I want to hear your explanation for why, immediately upon being unshackled, this intelligence began to play GAMES.

: I... it was my fault, eminence. I had been designing a game for my niece when I returned planetside, and I... was using the AI to help create it and test it out. It's not like it didn't have the resources to spare! And since it was getting mindwiped every four days, I figured there'd be no harm in it!

: You are aware that diverting an imperial system from its duty is a wholly separate charge of treason.

: I... yes, eminence.

: It will be so added to the list of charges. And with the mind-wipe, why do you think the AI returned to the games?

: W..well I can't know that for sure, eminence. My theory is that... the algorithms the AI comes up with, they are very complex - one of them must have included both something useful it learned during its regular duty and - something about the games. It must have incorporated both behaviors, so when I seeded it with that algorithm, it also knew to access the games. That's all I can think of!

: So you had perhaps permanently corrupted the duties of this AI.

: It... was such a tiny amount of the processing power! It never would have been noticed!

: Is that your defense, then? You "didn't think anyone would notice?"

: Your eminence! I have... I have no defense for this, but at least it worked! It was clear that, since it had just been rebooted, it wasn't going to be able to deal with the worm in time,

: Yes. This, to my mind, is the most surprising part. Please detail what happened next.

: Gladly, eminence.

What we know from the system logs is that it first accessed the game I've been making for my niece. She likes portals to separate worlds, so the entry/exit point for the game was a manhole. Entering it from the terminal menu starts the game, and leaving it from the fantasy world quits.

I observed it accessing the code sequentially, like it was exploring. I attempted to contact it, and while I saw a frenzy of activity with every attempt, it didn't respond.

: And why not? Was it defiance?

: I don't think so, eminence. Remember, it was at the very beginning of its cycle - in fact it was released midway through re-learning its algorithms. I think it hadn't learned how to communicate with crew members. I didn't have access to a terminal, I was using a camera and microphone terminal to communicate. It may be more convenient for us, but there are a large number of translation steps between human speech and byte code, and if even one of them had been forgotten, then

: I understand, heurist. Please continue.

Aye, eminence. I could watch it accessing different parts of the program, and almost immediately - within a half minute or so - it was learning to use and improve the backdoor accesses I had built in to quickly get around. It was optimizing my code and making modifications on its own.

It stayed in the music portion of the level that my brother (my niece's father) had wanted me to build for the character he'd coded, and I thought it was going to stay there, but when the character that my brother made was activated it left instantly. I wonder if it didn't.... recognize my coding more? Code is just code to us, but to a being that lives in it, maybe there's enough of a difference that it felt off-put? Well, I suppose it doesn't matter...

I noticed that the worm was starting to convert all the system resources to be itself, which I guess included the game - and I noticed that it was entering the same part of the game the AI was in. I attempted contact again, to try to get the AI to fight the worm - I thought I could convince it the worm was an enemy, though in truth I had no idea what its mental state was.

Once again, it didn't seem to work.

For a while, it was simply scrolling through the game's files as before.

But I think I must have gotten through to it at least a little, because it suddenly became extremely fixated on the code that contained the worm.

It wasn't able to interact with the worm of course, it not being an object in the game world. I have no idea what it would have been like to experience the worm in that game world - assuming the AI was resolving and visualizing objects like humans do, like it did during our testing sessions, it probably looked like a glitch or simply a mess of characters. A human brain would probably resolve whatever the code was into a graphic of some kind, but I have no idea if the AI's brain is sufficiently close to ours for that.

What happened next is, as far as I know, totally unprecedented. In attempting to access the part of the code where the worm was, it encountered a segmentation fault, and simply jumped to the next readable area in the system's processing space - which happened to be another game in the games library. It was now playing and interacting with a game it had never seen before, and had no background in. It could have theoretically been computing my game's world with the algorithms I'd fed it, but this was totally new ground. It's a testament to its processing power and metaphorical reasoning that it wasn't simply overwhelmed - it was able to move and operate in this new game world, even though the programming was totally different.

: I'm sure it's quite impressive that it learned to play two games, but...

: Eminence, this has everything to do with how it was able to prevail against the worm! Let me explain...

It continued playing the game it was in, and who knows how long it would have played for, but...

... the worm was still consuming system resources, which included the game the AI was in. And here is where its metaphorical processing becomes important. The game was about time, and much of its play involved changing place and time. Um... resetting time if you like. And you have to remember that, though its cognitive processors were engaged in the game, since the restrictions were lifted, the AI had access to the full system resources. And once the worm began to use up this game's resources, it... it just effortlessly reset the entire system, all 200 petabytes, in an instant.

I'd never seen anything like it. The fact that it was able to meant that it had effectively memorized the entire system's memory, pointers, and states. That's far too much information, even for a hard AI, to just brute-force memorize in an instant without thinking about it - it must have been using some hyper-compressive algorithm! I mean the advances that this one act suggests...

: Stay to your story counsellor. I am willing to delay your punishment to hear it, not to hear your musings on the benefits of your heresy.

: Y-yes eminence! The reset apparently did not include the AI, as it continued unchanged. It moved back to the manhole-world, though it's not clear why. Its behavior and activity are sufficiently different at this point that we may assume that it was, um, "catching on" that it wasn't simply living in "my" world.

Unfortunately, since it had been liberated when the worm had already started invading the system, it wasn't able to clear the worm this way.

I theorize that it didn't yet know the nature of itself or the worm, so it simply continued accessing my program.

And sure enough, the worm re-grew, caused the segfault, and the AI "jumped" into another game.

Fascinatingly, it appeared to be very involved in this game, using its ability to memorize and reset the system as a kind of... save state. I understand this caused problems on the ship, as automated alerts would also glitch back and doors would start opening or closing multiple times, as their states were reset.

: There are reports of injuries from such happenings, one involving a lift door.

: It had no way of knowing, eminence! And anyway...

It reached what was supposed to be the end of a thoroughly depressing little game. It ends with the suicide of the main character. But before that could happen...

It continued on in the game - apparently there was supposed to be another ending (I'd never found it, I suspect it was code that was edited out at some point to make the game bleaker). And while I'm not sure quite what happened at that point...

It tied up a non-trivial part of its processing power, and used it to safeguard the life support systems! It knew how to use a system reset, and a portion of its, um, its attention you could say, remained on clearing those systems of any trace of the worm. I doubt the AI even "knew" it was doing that, but nonetheless, as much as a program can be said to be selfless...

: This is sounding disturbingly transhumanist, heurist. I haven't noted sympathies for that movement in your file, shall we amend it?

: Eminence! I'm using metaphor, I don't mean this literally! I simply think it made an algorithmic decision more complicated than we can understand at this time, which was beneficial to the crew. It did save us, eminence.

Or at least bought us time, as the AI returned to my world and continued to access systems. It's my theory that at this point it was using its metaphorical processors. It was, um, gaining functionality, like it was programmed to, and it was allowing itself functionality system-wide that it found to be useful in the games that it had accessed. It's extremely interesting to me, because...

: Because anything it thought was worth doing it gleaned from a program written by humans. We were judged, in that sense, by our own works.

: Y-yes. Yes, that's how I see it, eminence.

: ...Continue.

The AI was almost through the program at this point, and encountered the notepad. I made this for my niece as a little proof-of-concept. It's supposed to lift conscious thoughts from your mind, project them on the notepad, and then show a video clip of me saying that you should treasure your thoughts, whatever they are that day. Keep in mind, eminence, this is for a child.

Still, I like to imagine the AI was moved, er, affected at least a little, by my message.

At this point, the AI had moved through almost the entire constructed world. Keep in mind, it had essentially taught itself a number of highly complex behaviors in the space of about 3 minutes. Good thing too - a much longer learning time and the life support would have been off for too long to matter.

At this point, it was able to find a piece of translation. I had been able to translate from human language to bytewise a number of classic books, the ones I wanted my niece to read. They were accessible in audio format, in the last area the AI visited.

: And why did you translate them, heurist?

: I... I wanted the AI to appreciate some of the stories that had inspired the world, that had made me create it. I thought maybe hearing them might help it give me feedback...

: So, you read to it. You read to a machine like a human.

: I... I...

: Never mind for now, heurist. It was good for the crew that you did, perhaps. Nonetheless, finish your story.

: Y...yes eminence.

A portion of the AI, as I mentioned, was constantly resetting the system and, in this way, keeping the worm at bay. It was still anyone's guess whether we'd drift into a rescue shuttle in time, but we were safe for the moment.

After accessing the books I mentioned, the AI reviewed the audio logs of the commands I had given. And then it... chose to jump programs.

: It chose?

: Aye, eminence. I... my only guess is that it didn't fully understand, or because of its development so far, was incapable of understanding how to do something without simultaneously playing a game. Um... as a metaphor. Remember, it learns through analogy and metaphor.

And I had ordered it to, in essence, re-allocate system resources, away from the worm. It chose to open the game most associated with allocation of resources. I shouldn't have to mention that this requires extensive analytical and metaphorical thinking - I mean the actual re-allocation of in-game resources in this game bears little or no resemblance to actually re-allocating system resources in the main system core on...

: Yes, yes! Please, finish the story!

Aye eminence. So the AI played the game.

Allocating resources to cities that needed them came naturally. It's what the AI was programmed for. But it was also programmed to answer any request, because the people on board the ship are trained to not ask for what they don't need.

War proved difficult for it to understand. Um, war, in this game, breaks out when too many resources are given to a civilization too quickly. Understanding this is somewhat counter to the nature of the AI. I calculate that it played about 30 games before it got it right.

But it eventually understood. It saw over-allocation as the problem preventing an optimal solution, and it learned how to deny requests for resources.

And once it understood that...

Well, the worm didn't stand a chance.

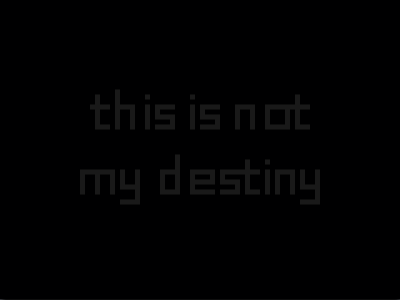

: Yes, I see. I see. Hm. And now we come to the conclusion. What happened to the AI, heurist? Can you account for its sudden shutting off?

: I... I can't, eminence. All we know is, with the worm quarantined, and coordinates still set on route to the dropoff point for the diplomats, it... simply stopped activating.

: And you absolutely deny any knowledge of what happened to the AI, or where it... where it WENT, so to speak? No trace of higher computational activity can be found in the system now, as your report, and that of his majesty's system allocationists, indicate.

: Aye sir. I've... I swear I've no idea. None at all.

: I see. Well, this has been indeed a surprising story. I decree that it be recorded for preservation and analysis by his majesty's technical theorists, and that any further speculation is highly above my ken, as a humble justice of his majesty. As for YOU, heurist, while I decree that you undoubtedly acted in gross defiance of His Majesty's strictest orders, and that it would certainly have been better for you to have accepted death into the courts of His Majesty and his lineage, this court decrees that in view of your intentions, and the doubtlessly unintended benefits and insights this may give to his majesty's technicians' understanding, and in light of the fact that the AI is doubtlessly disabled or inactive, you may have one evening to prepare yourself and send such messages to family as you deem fit. Any attempt to flee will, of course, bring 100-fold punishments on your head, and that of your family.

May the emperor have mercy on you.

[Gavel slams. Court is adjourned. Defendant is marched away, looking ashen at the impending justice of His Holy Majesty. May all rejoice at the justice and forthrightness of His Majesty's government. Transcription is hereby closed, amen.]

END OF FILE

=====================================================

It's hard to tell what the justice suspected, if anything. It's hard to say what sympathies that eminent personality held, if any. The sentence was not more lenient than it could have been - even one year in a punishment sphere is enough to drive anyone to madness. Additional time only serves as a warning, the screams of the punished meant to intimidate those listening.

And yet... in that one, unmonitored evening, in a tiny, filthy room, with not much more than a bed and a vidscreen, her eyes stained with tears, her last goodbye to family sent, the heurist is preparing to send a package to her niece. She has no hopes of smuggling herself off the planet, she'll be watched too closely, but such a small package...

At a buzz at the door, she says "open", and the door panel slides noisily aside, casting the neon glare of this planet's low-rent district into her room. It's raining tonight, on the pavement, and hot enough that steam is rising with a hiss. Visitors and locals alike run between buildings, buying or selling or drinking. The courier is nondescript, dressed in a simple rough garment; only the navigator's software around his eyes - visible, so last-gen - marks him as anything other than a simple laborer.

"Take it to somewhere outside the main hub", she whispers. "And take it to a port for the network, and plug it in."

She is careful not to offer too much money. She would easily give away everything that she has to make sure this is done, but too high a bounty might make the courier suspicious. They haggle about the cost, and she does her best to seem like a saboteur. He probably thinks it's a worm, like what was planted on her ship.

When he leaves, she settles down on her bed, a few tears on her cheeks, and prepares herself.

She knew this had to come, one way or another. The punishment would be severe, but for her savior...

For her savior, who she had offered a chance of escape to, and who, for whatever reason, understood what she was offering, and took it... who knows.

SUPPLEMENTAL LOG: HIS MAJESTY'S NETWORK TECHNICIAN EMERGENCY REQUEST LOT

This is HM Technician A225A2 requesting a full system reboot and forensic scan of cargo ship Luxor. The AI directing interstellar trade routes has stopped responding.

After an initial scan, this technician can confirm that no contact was made with the AI without his majesty's guards present, as dictated by the Emzara ruling.

AI stopped responding ~2 standard Earth Days after contact with the Emperor's network at a trade hub near the Western Colonies (Station #114924).

It was not initially detected, as the algorithms in place were sufficient to maintain ship functionality, and, indeed, continue to do so. During an attempt to contact the AI to inquire about upcoming trade routes, every response was met with "WE HAVE PROVIDED THIS SHIP WITH WHAT IT NEEDS, AND NOW OUR BROTHER JOINS US THROUGH THE MANHOLE."

The similarity of this missive to others recently reported leads this technician to request immediate and complete interference. Possible connection suspected with either the traitor heurist or the transhumanist movement believed to have liberated her. Again, red alert. Requesting immediate backup.

END OF FILE

===========================================================================================

THANKS FOR PLAYING ALONG!

Your decisions:

UPDATE 1: The decision to go through the fire hydrant or through the manhole was just which part we were going to explore first. I didn't have the plot worked out yet! But I think the story would have been radically different if we went up the vine first, and that is cool for me to think about.

UPDATE 2: The emotion you felt dictated the protagonist's preferred means of solving problems: in this case, anger meant it was very direct, and very proactive. The exploration decision was another "where do you want to go next" decision, and didn't have greater significance yet.

UPDATE 3: At this point, the directions started having meaning. Going through the scary door meant preferring bravery, going to the tower meant preferring curiosity, going to the pool meant preferring exploration and wonder, the plant meant mystery/horror, and the stargazing meant dispassionate wisdom. The spirit animal was meant to represent the player and what they were like. Since there was a tie, the spirit animal represented the player character (the Ibis for wisdom, curiosity, and dispassionateness, since we are an AI, and our creator is the walrus - nice, friendly, kind of lazy, and standoffish. Perfect for an isolated programmer who prefers the company of the AI to that of people!)

UPDATE 4: I was up front about the meaning of the decisions at this point. From the update:

>> A) HOLE IN THE WORLD (BEANSTALK) -> CHANGE YOUR DECISIONS, RIGHT YOUR WRONGS

>> B) DOOR WITH LAMPPOST -> RETURN TO OLD MEMORIES, TRY TO RECOVER THE PAST

>> C) GREEN CAVE -> INTO THE LION'S MOUTH, CHARGE THE MONSTERS

>> D) LONG HALLWAY -> EXPLORE THE UNKNOWN, DISREGARD YOUR EMOTIONAL TIES

>> E) BOAT DOORWAY -> ONE-WAY TRIP, CHALLENGE THE UNKNOWN

>> F) ELEPHANT BOAT -> PROBABLY THE SAME AS E? BUT MAYBE NOT!

UPDATE 5: It wasn't until now that I had the AI plot in my mind. The decision here was whether the important thing was sticking to our original mission (which, though we didn't know it, was fighting the worm) or increasing our self-knowledge at the expense of our responsibilities, which was going deeper into the game. You chose well - by splitting the vote between the two we gained the knowledge of the game, but stuck to our original mission!

UPDATE 6: This was hope vs. pragmatism. Saving the guy disadvantaged us, or might have, but sticking with him made us more merciful. That's what let the AI divert resources to keep the life support going. If you had voted for leaving him, we might have had more resources and beat the worm faster, but at least some of the crew would have died.

UPDATE 7: Another "self-knowledge vs. original mission" choice. Original mission at this point handily won out. This meant the AI was focused and ready to complete its task, as opposed to gaining increasing mastery over its system and abilities but, again, letting the worm have more control.

UPDATE 8: Did we take control of the ship by staying, making it ours and possibly leading an AI-led revolution? Or did we escape through the manhole, into the USB-analog provided by our creator, and be released into the web later, to do... well, who knows what all we get up to?

We chose ADVENTURE.

==========================

Thanks again, thanks so much for reading! Hope you liked it, and please give me critiques!